For the past few years through the robotics club at my school, I have participated in the IEEE Arduino Challenges. These are complex design and programming challenges that will test the skills of the participants – high school students. In previous years, I have done the IBM LRT Detour challenge which involves line following, obstacle avoidance, and maze solving algorithms, as well as the Green Arm Challenge to detect, identify, and sort colored blocks.

This was my second year of the Green Arm Challenge, and through the last few competitions, I have learned so much – an introduction to graph theory with maze solving algorithms, Fusion 360 assemblies, and especially time management (it helps to have a working robot on competition day!).

Now on to the competition. The goal of the competition is to detect, identify, and sort 3D printed coloured blocks, picking them up from one area, and placing them in other areas based on their color. The complete rules are available here. The recommended hardware (according to IEEE) is on that page as well, but of course I made some of my own adjustments.

As with most open-source hardware, there are always some modifications required. So let’s go over what I did:

Arm and Servos: The base platform is a MeArm – an open-source 4DOF robotic arm made by phenoptix. All the instructions and the laser cutting files are available on their instructables page here. The original one was purchased from robotshop as a complete kit for way more than what I would be willing to spend. After assembling it according to their instructable, the acrylic on acrylic rotation points were sticking a fair bit, so we tried putting nylon washers between the joints. Unfortunately, that did not solve the problem. It could have been because the bolts were too tight and threading directly into the acrylic, but if loosened, the robot would be too unstable. To fix all those problems, I downloaded the dxf files from their instructables page, removed the self-threading screw holes (as bolts and nylock nuts will be used), strengthened a few of the parts, and used the laser cutter at the Imagine Space in the Nepean Centrepointe Library to cut everything out of 3mm MDF. This arm was used in the first year of the Green Arm Challenge, but the next year I redesigned it again because it was still pretty flimsy. This time it used bigger servos (MG995) for the main rotation points (shoulder and elbow) because the smaller SG90 servos were a little too slow and not precise enough to make the new laser time of flight sensor worth it. I also modified the base of the robot to include a teflon rod in a circle around the base rotation to eliminate wobble of the whole robot by providing extra support at the front and back of the robot, while not sacrificing any speed or accuracy. In fact, it actually increased the accuracy of the height and distance of the robot because the wobbling was eliminated.

Claw Modifications: The claw needed some modifications as well. The original acrylic and MG90S servo claws had the same rotation problems as earlier – they would get stuck. With the laser cut MDF version, it would not grip hard enough (this would also have been a problem with the acrylic version, had it not been getting stuck). Blocks would often swing out of the claw because there were only 2 points of contact on the block, and blocks would also often be dropped because the claw was not gripping hard enough. The way that this claw works is also not the best – the servo gets sent to a specific position, and in order to grip hard enough, the servo has to be trying to reach a position that it cannot reach because of the block it is gripping. This ends up grinding the servo gears and heating the servo up – obviously not ideal. So, I added some current limiting to the servo in order to avoid grinding the gears and heating up, but with this method the servo does not grip as hard. So, I also redesigned the claw to have 6 points of contact on the blocks rather than the original 2. I also put elastics between the contact points, in order to provide some extra grip on the block. Once the servo angles for opening and closing the claw were properly calibrated, a block was never dropped or swung out again.

Ultrasonic Distance sensor: To find the blocks, we had to use some sort of distance sensor. The first year, that sensor was an HC-SR04 ultrasonic sensor. Obviously not ideal, but that’s what we had to work with. Ultrasonic sensors are not ideal for detecting the blocks in the 45 degree ‘loading dock’ area, as their detection cone is around 30 degrees wide. So, any block the sensor sees could be anywhere within a 30 degree angle. To try and fix this, we ‘swept’ across the area, measuring the distance at each degree, and found the lowest distance. Then we went to the angle at which the lowest distance was found and took off a loosely guessed 5 degrees to account for sensor error as it is sweeping across (it will see the block before the block is actually in front of it). However, this method proved to be unreliable. We also tried desoldering the ultrasonic transducers from the board and mounting the transmitter under the claw, pointing upwards at a 45 degree angle, and the receiver above the claw, so that the sound from the transmitter would reflect off the block only when it was in front of the claw. With this, we would have to sweep across the area, but also move the claw further from the base to detect the blocks that are further away. Unfortunately, mounting the ultrasonic transducers in this location made it so that the claw could not be lowered enough to grab the blocks: the transmitter would hit the platform before the claw was low enough. Another method we tried was attaching cylinders to the front of the transducers in the original location (at the base of the robot), in order to direct the sound waves coming out of the sensor. While this method worked very well for objects further away (probably greater than 30cm), it did not work for close objects, as the reflected sound waves must come back parallel and a few cm displaced in order to be detected, but instead come back almost right into the first transducer.
Long story short, the HC-SR04 ultrasonic sensor is not suitable for this application.
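The sweep described above can be sketched in a few lines. This is only an illustration of the idea, not the code that ran on the robot: the `SweepSample` struct, the `estimateBlockAngle` name, and the assumption that the sweep runs in the direction of increasing angle (so the correction is subtracted) are all mine. The 5 degree default is the loosely guessed correction mentioned above.

```cpp
#include <cstddef>
#include <vector>

// One reading taken during the sweep (hypothetical layout):
// the base angle in degrees and the measured distance in cm.
struct SweepSample {
    int angleDeg;
    float distanceCm;
};

// Finds the sample with the smallest distance and writes its angle,
// minus a lead correction, to angleOut. Returns false if there were
// no samples, in which case angleOut is left untouched.
bool estimateBlockAngle(const std::vector<SweepSample>& samples,
                        int& angleOut,
                        int leadCorrectionDeg = 5) {
    if (samples.empty()) {
        return false;
    }
    std::size_t best = 0;
    for (std::size_t i = 1; i < samples.size(); ++i) {
        if (samples[i].distanceCm < samples[best].distanceCm) {
            best = i;
        }
    }
    angleOut = samples[best].angleDeg - leadCorrectionDeg;
    return true;
}
```

With a wide detection cone, many neighbouring angles report nearly the same minimum, so the chosen angle depends heavily on noise. That is consistent with this method being unreliable in practice.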

VL6180X and VL53L0X ToF distance sensors: A much better suited sensor for detecting blocks at smaller distances is the VL53L0X or VL6180X Time of Flight laser distance sensor. These are ideal mainly because they use light beams instead of ultrasonic sound waves to detect objects. As a result, they have a very small detection cone (it is just a beam of light), and will only detect an object when it is actually in front of the sensor. These sensors are also made for the range that we are using them in. The VL6180X has a range of 3-200mm with an accuracy of 1mm, so at first this is the sensor that we went with, as the maximum distance was perfect and we were looking for the highest accuracy possible. When testing it, however, this sensor was wildly inaccurate (off by 1-2cm) at distances longer than about 13 or 14 cm, getting worse as the distance grew closer to 200mm. After paying an outrageous amount for a VL53L0X from Amazon (instead of my usual AliExpress orders – this one had a deadline), we were much more satisfied with the results. The VL53L0X is made for distances from 30-2000mm, with a slightly coarser accuracy than the VL6180X. This sensor matched exactly what we needed, and worked perfectly during testing.

TCS3200 TCS230 Color Sensor and mounts: These color sensors are the bog-standard color sensors in the Arduino community because they are so cheap. The one main problem with them is that they are very sensitive to external light. If any of the lighting conditions in the room change, they likely have to be recalibrated. The other problem that this creates is a very limited and precise working range. I was able to get mine working in a roughly 0.5 – 1.5cm range. The PCB they are mounted on comes with four 5mm white LEDs to illuminate the object. As the sensor gets closer to the object the accuracy increases, and these LEDs were getting in the way. So, they were removed from the board and mounted above the sensor. To get as much accuracy and consistency as possible, the sensor was mounted as close as possible to the blocks – in the crevice where the claw closes, using a custom 3D printed mount. This took a few revisions in order to get the optimal distance and light positions. In the first revisions, the four 5mm LEDs were mounted above the claw servo, but the final version used a 1W LED with a constant current driver made from 10W power resistors (what I had on hand) and some transistors to allow the Arduino to control it. This higher power LED was chosen to provide more light in general, as well as more directed light. This way, small deviations in the distance from the sensor matter less, because there is still enough light getting to the sensor.

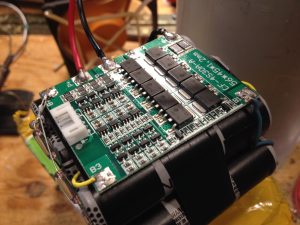

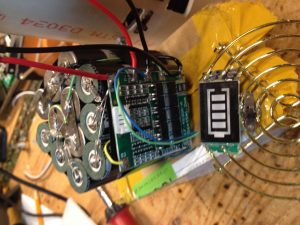
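Because the readings shift with the lighting, one common way to turn them into a block color is to store a calibrated reading for each color and pick the closest match. The sketch below shows that idea only. It is not necessarily how the robot's code classified blocks, and the `Rgb`, `ColorReference`, and `classifyColor` names, the calibration numbers, and the rejection threshold are all made up for illustration.

```cpp
#include <cstddef>

// Raw red, green, and blue channel readings from the sensor
// (units depend on how the sensor output is measured).
struct Rgb {
    int r;
    int g;
    int b;
};

// A calibrated reading for one block color (hypothetical values
// would be recorded under the competition lighting).
struct ColorReference {
    const char* name;
    Rgb reading;
};

// Returns the index of the reference closest to the sample, using
// squared Euclidean distance across the three channels. Returns -1
// if no reference is within maxDistSq, so that an empty claw or a
// bad reading is not forced into a color.
int classifyColor(const Rgb& sample,
                  const ColorReference* refs,
                  std::size_t count,
                  long maxDistSq) {
    int best = -1;
    long bestDist = maxDistSq;
    for (std::size_t i = 0; i < count; ++i) {
        const long dr = static_cast<long>(sample.r) - refs[i].reading.r;
        const long dg = static_cast<long>(sample.g) - refs[i].reading.g;
        const long db = static_cast<long>(sample.b) - refs[i].reading.b;
        const long dist = dr * dr + dg * dg + db * db;
        if (dist <= bestDist) {
            bestDist = dist;
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

Recalibrating after a lighting change then only means re-recording the reference readings, with no change to the matching logic.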

Power Considerations (especially with sensor shield): To power all this, and keep the wiring manageable, a Sensor Shield for the Arduino Uno was used. In order to run the servos at the maximum possible speed, they were driven from 6V. Unfortunately, this created some complications with the Sensor Shield. The external power terminal block was connected directly to 5V, and was routed around the board as 5V, including into the Arduino’s 5V pin. Driving this external block with 6V would clearly create some problems. So, some PCB traces were cut and re-attached with jumper wires in other places in order to route the terminal block input to the Arduino’s VIN pin, as this pin feeds the Arduino’s onboard regulator. From the terminal block, the 6V goes to the servo/sensor headers on the shield. The 5V pin on the Arduino was connected to all other devices on the shield – I2C, SPI, and other breakout pins. Now that the servos, and only the servos, were powered with 6V, we also had to take into account the current draw. With the larger MG995 servos having a max current draw of 2 or 3A, this robot can draw up to 8A under full load. However, that will never happen, as the movement, claw grabbing, and light pulsing never happen all at once. Also, the large servos will never draw their max current, as moving a small robotic arm is not a problem for them. As a power source, we went with a Li-ion battery custom-made from recycled laptop batteries (either 2S2P or 3S2P depending on which one was used) because there were tons readily available. To get the voltage down to 6V, a 5A 75W buck converter was used. We could have gone with a much beefier 9A module, but given that the large current draws for this robot are never sustained, a 5A buck converter is adequate. The 6V was fed directly into the sensor shield’s external power terminal, and the Arduino was powered through the VIN pin.

Control Library: The last main part of the build was programming – which was a fairly interesting problem. We needed to figure out a way to translate a given height, distance and angle of the claw into servo positions in order to travel to a specific point and grab a block. There is an Arduino library called mearm IK by yorkhackerspace on GitHub that works very well to control the MeArm in 3D space, however I did not really like the way that you had to use it, and the calibration instructions were not well documented. So I decided to make my own algorithm to control the height, distance and angle of the claw. This height, distance, angle option was chosen because block distance is a distance, height off the ground is also a distance, and the field was a semi-circle – so an angle for that makes sense. The angle of the base is controlled via a servo, which makes that part real easy. A bit of calibration to determine the maximum and minimum angles for each 45 degree area (which is not really 45 degrees on the servo) was everything required. The height and distance were a little more complicated. After drawing out a large scale version of the robot, and some careful analysis, I noticed that the angles of the servo arms can be computed with the cosine law. The 2 links having the same length made this calculation a little easier as well, because we have an isosceles triangle. If I can find the messy drawings I made, I will include them here. Converting this to the servo angles proved to be a little more difficult. We had to map the angle based off of an imaginary bar parallel to the ground at the height of rotation of the servos. Slowing down the servos was also a major consideration. If the servos have too much jerk, then the block is more likely to fall out of the claw while it is moving.
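The height and distance calculation mentioned above can be sketched as follows. This is a reconstruction under my own assumptions, not the library's actual code: it assumes two links of equal length pivoting from a shoulder at the origin, and it measures both link angles against a horizontal reference through the shoulder. The `ArmAngles` and `solveArm` names are hypothetical, and the final mapping from these angles to calibrated servo values is left out. For an isosceles triangle with equal sides $L$ and base $r$, the cosine law reduces to $\cos\alpha = r / (2L)$ for the base angles.

```cpp
#include <cmath>

// Link angles in degrees, both measured from a horizontal line
// through the shoulder pivot (negative means pointing downward).
struct ArmAngles {
    double upperArmDeg;
    double forearmDeg;
};

// Solves for the link angles that put the wrist at the given
// horizontal distance and height (relative to the shoulder pivot),
// for two links of equal length. Returns false if the point is out
// of reach. Uses the elbow-up solution.
bool solveArm(double distance, double height, double linkLength,
              ArmAngles& out) {
    const double kPi = 3.14159265358979323846;
    const double reach = std::sqrt(distance * distance + height * height);
    if (reach <= 0.0 || reach > 2.0 * linkLength) {
        return false;
    }
    // Base angle of the isosceles triangle: cos(alpha) = reach / (2 L).
    const double alpha = std::acos(reach / (2.0 * linkLength));
    // Angle of the straight line from the shoulder to the target.
    const double elevation = std::atan2(height, distance);
    out.upperArmDeg = (elevation + alpha) * 180.0 / kPi;
    out.forearmDeg = (elevation - alpha) * 180.0 / kPi;
    return true;
}
```

For example, with a link length of 80 and a target at distance 80 and height 0, the triangle is equilateral, so the upper arm points 60 degrees up and the forearm 60 degrees down.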
To limit the jerk while still maintaining maximum speed, I implemented an algorithm that found the distance to the start and end points of the servo’s movement, then slowed the servo down as it was just starting to move and as it approached the end of its movement. In theory, this would have worked, but it seemed that the movement commands were getting ahead of the servo, and as a result the deceleration as it approached the end point never happened. In the end, all the servo movements were simply slowed down, because that was fast enough for our purposes. The first year, I put all this code to calculate the angles in the main program, and suffered through the endless amounts of scrolling. The second time around, I was a little smarter about it. I created my own library with all the control code in it, which made the main program much neater and easier to understand.
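The slow-start, slow-stop idea can be sketched as a function that picks the delay before each one-degree step based on how close the servo is to either end of the move. This is an illustration only: the `stepDelayMs` name, the linear ramp, and the default ramp width and delay values are my assumptions, not the values used on the robot.

```cpp
#include <algorithm>
#include <cstdlib>

// Returns how long to wait (in ms) before the next one-degree step.
// Steps within rampDeg of the start or end of the move get a longer
// delay, shrinking linearly from slowDelayMs at the endpoints to
// fastDelayMs in the middle of the move.
int stepDelayMs(int startDeg, int endDeg, int currentDeg,
                int rampDeg = 10, int fastDelayMs = 5,
                int slowDelayMs = 25) {
    if (rampDeg <= 0) {
        return fastDelayMs;
    }
    const int fromStart = std::abs(currentDeg - startDeg);
    const int toEnd = std::abs(endDeg - currentDeg);
    const int nearest = std::min(fromStart, toEnd);
    if (nearest >= rampDeg) {
        return fastDelayMs;
    }
    return slowDelayMs - (slowDelayMs - fastDelayMs) * nearest / rampDeg;
}
```

On the Arduino, the calling loop would write one degree at a time to the servo and wait for the returned delay. The profile only works if the servo keeps up with the commanded position, and a standard hobby servo gives the Arduino no position feedback to check that. If the commands run ahead of the servo, as described above, the final slow-down is lost.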

Final Results: When the day of the competition came, we were fairly confident, having a functional robot that worked most of the time. We even had extra time to program it to not place blocks down on top of each other if multiple blocks of the same color were gathered. After a bit of tuning to the proper layout of the field (different places to put the green and blue colored blocks), the robot was able to reliably find, retrieve, and sort the colored blocks.